Acta Paedagogica Vilnensia ISSN 1392-5016 eISSN 1648-665X

2025, vol. 55, pp. 222–239 DOI: https://doi.org/10.15388/ActPaed.2025.55.13

Jana Rozsypálková

Language Centre, University of Defence

Kounicova 65, 662 10 Brno, Czech Republic

jana.rozsypalkova@unob.cz

https://orcid.org/0000-0002-2959-3861

https://ror.org/04arkmn57

Eva Bumbálková

Language Centre, University of Defence

Kounicova 65, 662 10 Brno, Czech Republic

eva.bumbalkova@unob.cz

https://orcid.org/0000-0001-8206-7687

https://ror.org/04arkmn57

Abstract. Self-regulated learning (SRL) is a concept of great use for teachers of adult learners as these learners are especially capable of engaging in autonomous behaviour, and their understanding of aspects of SRL allows teachers to identify areas in which to provide support so as to make learning more effective. A validated instrument for a particular population is necessary to obtain reliable data on the basis of which SRL may be explored. In this study, we aimed to modify the Czech DAUS1 self-regulated learning questionnaire, based on the Motivated Strategies for Learning Questionnaire, the Learning and Strategies Study Inventory, and the Metacognitive Awareness Inventory, for adult learners in informal language education, and to validate the new instrument. First, items addressing aspects unrelated to informal education were modified or eliminated by an expert panel. Subsequently, exploratory factor analysis was conducted on a sample of 403 adult learners, resulting in a three-factor model. The factors included self-efficacy (14 items), metacognition (8 items), and motivation (8 items). The Cronbach’s alpha value of the new instrument was determined as 0.83. The new validated instrument ought to facilitate further investigation of SRL in adult learners in informal education.

Keywords: self-regulated learning, instrument validation, self-efficacy, learning strategies, motivation.

Santrauka. Savireguliacinis mokymasis (SRM) yra naudingas konstruktas suaugusiųjų mokytojams, nes tokie besimokantieji ypač geba elgtis savarankiškai, o supratimas apie SRM aspektus leidžia mokytojams nustatyti sritis, kuriose reikia paramos, kad mokymasis būtų efektyvesnis. Norint gauti patikimus duomenis, kuriais remiantis būtų galima tirti SRM, būtina turėti validuotą ir konkrečiai gyventojų grupei skirtą instrumentą. Šio tyrimo tikslas buvo modifikuoti ir validuoti neformaliajam suaugusiųjų kalbų mokymui skirtą Čekijos DAUS1 savireguliacinio mokymosi klausimyną. Klausimynas sukurtas pagal kitus instrumentus: Motyvuojančių mokymosi strategijų klausimyną, Mokymosi ir strategijų tyrimo aprašą bei Metakognityvinio sąmoningumo aprašą. Pirmame validavimo etape ekspertų komisija modifikavo arba pašalino su neformaliojo mokymo aspektais nesusijusius teiginius, vėliau atlikta tiriamoji faktorinė analizė 403 suaugusių besimokančiųjų imtyje, kuri padėjo išskirti tris faktorius: saviveiksmingumas (14 teiginių), metakognicija (8 teiginiai) ir motyvacija (8 teiginiai). Naujojo instrumento Cronbacho alfa koeficientas: 0,83. Validuotas instrumentas turėtų palengvinti tolesnius suaugusiųjų besimokančiųjų neformaliojo švietimo srityje savireguliacinio mokymosi tyrimus.

Pagrindiniai žodžiai: savireguliacinis mokymasis, instrumento validavimas, saviveiksmingumas, mokymosi strategijos, motyvacija.

_________

Received: 27/03/2025. Accepted: 01/10/2025

Copyright © Jana Rozsypálková, Eva Bumbálková, 2025. Published by Vilnius University Press. This is an Open Access article distributed under the terms of the Creative Commons Attribution Licence (CC BY), which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

Nowadays, the concept of learner autonomy as “the ability to take charge of one’s own learning” (Holec, 1981, p. 3) is one of the key concepts in language teaching. Autonomy, according to Pawlak (2016, p. 60), should be particularly valued in adults, as they are “most capable of engaging in autonomous behaviours and receptive to a multitude of ways in which autonomy can be fostered”. While focusing on lifelong learning, it appears vital for teachers to acknowledge the specifics of teaching adults, considering their experience and offering support in areas such as motivation, self-direction, or learning strategies. Teachers ought to be aware of both the cognitive and non-cognitive aspects that can affect the process of learning in adults in general, as well as in second language acquisition.

As students’ experience is reflected in the way they approach learning, and as self-directed learning is typical for adults (Knowles, 2011, pp. 38–39), the concept of Self-Regulated Learning (SRL) casts light on the entire process of learning and the various facets involved, namely, the cognitive, motivational, and emotional aspects of learning (Panadero, 2017, p. 1). Self-regulated learning is “an active, constructive process whereby learners set goals for their learning and then attempt to monitor, regulate, and control their cognition, motivation, and behaviour, guided and constrained by their goals and the contextual features in the environment” (Pintrich, 2000, p. 453). Zimmerman (2000) created his influential cyclical SRL model on a socio-cognitive basis, emphasising the interaction between the environment, behaviour, and person levels of SRL. According to this author, there are three (cyclical) phases of SRL: forethought, performance or volitional control, and self-reflection, with each of the phases involving metacognitive processes, motivation, and utilization of strategies. The model of Winne and Hadwin also emphasises the cyclical nature of SRL, by perceiving SRL in four phases: task definition, goal setting and planning, enacting study tactics and strategies, and metacognitively adapting studying (Winne, 2011). Pintrich (2000) claims that SRL consists of four phases: forethought, planning and activation, monitoring and control, and reaction and reflection, with each phase also encompassing cognition, motivation or affect, behaviour, and context as areas for regulation.

Understanding various aspects of SRL can help teachers identify the areas where intervention could help students, while maintaining the focus on students’ autonomy and agency. Measuring SRL may reveal other important information; for example, SRL measures correlate with the measures of course or academic performance (Pintrich & de Groot, 1990; Zimmerman & Martinez-Pons, 1986; Rock, 2005; Credé & Phillips, 2011). According to Pawlak (2016, p. 60), “one of the priorities for teachers dealing with adult learners is to determine the level of their independence and take actions to extend it”.

SRL can be measured as aptitude, which means an attribute of a person that can predict their future behaviour. Measuring aptitude means concentrating on the third and fourth phases of Winne and Hadwin’s model (enacting study tactics and strategies, and metacognitively adapting studying), and it complies with the definition of SRL as “relatively stable individual inclination to respond to a range of learning situations in a typical way” (Boekaerts & Corno, 2005, p. 207). Alternatively, SRL can be measured as an event, a temporary entity with a discernible beginning and an end (Winne & Perry, 2000, p. 534); here, the context and the task students complete play a vital role.

Various instruments can be employed to ascertain the level of SRL and its various aspects (cognitive, metacognitive, behavioural, motivational, and emotional/affective) in students. Instruments attempt to capture SRL in its complexity; nevertheless, including all of the aspects in a single measure appears a rather daunting task. Instruments used to measure SRL include questionnaires, structured interviews, teacher ratings, think-aloud protocols, trace methodologies, error detection tasks, and observation of performance (Winne & Perry, 2005). Questionnaires and structured interviews are typically used to measure SRL as an aptitude, abstracting from unique contexts of single tasks and offering a broader picture of a variety of aspects of students’ SRL, such as learner strategies, metacognition, motivation, etc.

According to Winne and Perry (2005, p. 542), the two self-report questionnaires used most frequently in research are the Learning and Strategies Study Inventory (LASSI; Weinstein et al., 1987) and the Motivated Strategies for Learning Questionnaire (MSLQ; Pintrich et al., 1991). Other measures used include, for instance, the Metacognitive Awareness Inventory (MAI; Schraw & Dennison, 1994), the Academic Self-Regulation Scale (Magno, 2011) – a validated instrument based on Zimmerman’s model of SRL, and the structured interview protocol Self-Regulated Learning Interview Schedule (Zimmerman & Martinez-Pons, 1986).

MSLQ is based on Pintrich’s model of SRL. It is composed of 15 subscales falling under the wider umbrella of two scales: the motivational and the learning strategies scales. The motivational section, comprised of value, expectancy, and affect scales, is composed of 31 items. Learning strategies, which contain 50 items, are subdivided into cognitive strategies, metacognitive strategies, and resource management strategies. The MSLQ combines motivation with SRL and is the most widely used instrument in SRL measurement in higher education (Roth et al., 2016, p. 228). Credé and Phillips (2011) in their review focused on the associations between GPA/grades and learning strategies as measured by the MSLQ. The MSLQ has been translated into numerous languages, and has also been widely adapted for different contexts. The MSLQ has been criticised as the validity of its overall factor structure was never confirmed (Liu et al., 2019). De Araujo et al. (2023), in their attempt to validate the Brazilian Portuguese version of the MSLQ as a whole instrument, empirically identified the general factor of SRL, but confirmed four broad components (instead of the original two). The general factor, as well as twelve of the subscales, proved reliable; there were nevertheless three problematic areas where reliability was low: intrinsic goal orientation, elaboration, and metacognitive self-regulation. Sections of the MSLQ were validated and modified: Liu et al. (2019) used bi-factor analysis for the learning strategies scale and confirmed its multidimensionality (i.e., one general factor and two group factors). The authors reduced the number of items to 18. Dunn et al. (2012) used exploratory factor analysis (EFA) to verify two subscales, metacognitive self-regulation and effort regulation, arriving at a different structure of subscales, i.e., general strategies and clarification strategies. 
Researchers are aware of the incomplete validation of the MSLQ and create new instruments based on the scale or adapt the scale to serve their purposes, validating the new instruments (e.g., Soemantri et al., 2018; Chang, 2010; Hrbáčková et al., 2011; Ramírez Echeverry et al., 2016).

The LASSI instrument was developed in 1987 as a norm-referenced domain-general strategic inventory. There are several versions with minor differences: a high-school version of the LASSI was developed in 1990 (Weinstein & Palmer, 1990); there is also an online version (Weinstein et al., 2006); and the LASSI 3rd Edition was published in 2016 (Weinstein et al., 2016). The LASSI was designed for empirical and diagnostic purposes, with the objective of assessing students’ awareness and usage of learning strategies related to skill, will, and self-regulation. The third version comprises 10 scales, with each of them containing 6 items (as opposed to the 90 items in the first version). The scales include information processing, selecting main ideas, test-taking strategies, anxiety, attitude, motivation, concentration, self-testing, time management, and study aids (renamed to using academic resources in the 3rd version). According to the LASSI: User’s Manual (3rd ed.) (2016), the inventory scales are reliable, and the factorial validity has been verified against similar measures. Cronbach’s alpha of individual subscales is above 0.76. Cano (2006) explored the LASSI’s construct structure. Data analysis revealed acceptable psychometric properties and suggested a three-factor model: the three latent constructs, i.e., Affective Strategies, Goal Strategies, and Comprehension Monitoring Strategies, were shown to be interrelated, and the first two were positively linked to academic performance. Interestingly, the areas of skill, will, and self-regulation did not appear as independent constructs. Marland et al. (2015) used a principal component analysis and expressed their doubts about the validity of the LASSI as a measure of study skills and learning strategies, as well as doubts about the referential frame (a norm provided by the LASSI manual), which reflects the United States context. The measure is widely used.
In their review of 180 studies from over 20 different countries which had used the LASSI as an instrument, Fong et al. (2021) investigated the relationship between learning strategies and academic achievement, demonstrating that “the LASSI subscales and the total LASSI score were significantly and positively associated with most students’ academic outcome” (p. 13). The motivation subscale correlated most strongly with academic outcomes, while the study aid subscale showed the weakest correlation.

The Metacognitive Awareness Inventory (MAI) was developed by Schraw and Dennison in 1994 to measure metacognitive awareness in adolescents and adults. The instrument comprises 52 items. The inventory reflects the theoretically established components of metacognition: knowledge of cognition, and regulation of cognition (Schraw and Dennison, 1994, p. 460), with these two broader components subsuming three and five subprocesses, respectively. Knowledge of cognition includes declarative knowledge, procedural knowledge, and conditional knowledge. Regulation of cognition includes planning, information management strategies, comprehension monitoring, debugging strategies, and evaluation. The authors compared the 2-factor and 8-factor solutions, and the results they obtained supported the 2-factor model. The instrument structure has been examined further: Akin et al. (2007) conducted an exploratory factor analysis and arrived at an 8-factor solution matching Schraw and Dennison’s intended theoretical structure. Harrison and Vallin (2018) used confirmatory factor analysis (CFA) and multidimensional random coefficients multinomial logit item-response modelling to examine the MAI structure, thereby supporting the two-dimensional model of the MAI, but showing that the 52-item instrument had a poor fit. They tested subsets of items which represented the theory and had a good fit. Their results support the use of a 19-item subset of the original MAI. Various versions of the MAI were developed for differing populations, e.g., a 24-item version of the MAI for Teachers (Balçıkanlı, 2011) and two versions of the MAI for children, the Jr. MAI (Sperling et al., 2002). The MAI was also adapted and validated for use in different countries, e.g., Alotaibi (2024) confirmed the 2-factor structure of the shortened, 18-item Arabic version of the MAI using CFA, but arrived at a 3-factor model using exploratory graph analysis; Susnea et al. adapted the MAI for teachers for the Romanian context; EFA in their research yielded a 21-item two-factor model including knowledge of cognition and regulation of cognition. The MAI is used to investigate the level of metacognitive awareness as such (e.g., Masoodi, 2019) as well as to study various concepts in relation to metacognition, such as the motivation to learn (Siqueira et al., 2020), self-evaluation (Kallio et al., 2018), thinking styles (Zhang, 2010), or self-efficacy and use of learning strategies (Nosratinia et al., 2014). Young and Fry (2008) found a correlation between the MAI results and academic achievement (cumulative grade point average and end-of-course grades). Schraw and Dennison (1994) discovered that the knowledge of cognition factor was “related to pre-test judgments of monitoring ability and performance on a reading comprehension test, but was unrelated to monitoring accuracy” (p. 460).

Measuring SRL by using self-report questionnaires often requires an adaptation and validation of the questionnaire in a particular language. Moreover, university students (of varying subjects) are the typical respondents in such studies: logically, at universities, data that predict academic performance are of great importance. However, working with other types of learners also requires reliable and valid instruments: researchers need to validate instruments adapted to their particular sample. While a version of the LASSI for high schools does exist, instruments specifically created for adult learners are scarce. Meijs et al. (2018) developed an MSLQ version for adult long-distance learners, which was further adapted, for example, by Zhou and Wang (2021) for Chinese adult long-distance learners.

In our study, we decided to adapt the existing version of the DAUS1 questionnaire (Dotazník autoregulace učení studentů [en. Students’ Self-regulated Learning Questionnaire]) as a validated questionnaire in Czech for university students, based on the MSLQ, the LASSI, and the MAI (Hrbáčková et al., 2011). DAUS1 was constructed by using the original 103-item DAUS questionnaire, which consisted of four subscales: motivation (32 items), cognitive strategies (13 items), metacognition (40 items), and environment and learning context (8 items). The properties of the DAUS1 are described below in the research method section; the DAUS1 comprises four subscales: goal orientation, self-efficacy, metacognitive strategies, and study value. The questionnaire was used, for instance, to investigate associations among motivational beliefs, self-efficacy, metacognitive strategies and meaningfulness of studies (as factors influencing the respondents’ self-regulated learning that contribute to the development of their academic self-concept) in entry level academic staff from the Czech Republic, China, and Spain (Balaban et al., 2019).

The DAUS1 appeared to be a suitable instrument for the purposes of our research as we aimed to establish the level of SRL in Czech adult English language learners in a precise manner. Our choice was guided by the structure of the questionnaire, as the items reflect the main aspects of SRL.

The aim of the study was to establish whether a modified DAUS1 scale was suitable for investigating SRL in adult learners and to verify the validity of the scale for an adult population in informal education.

DAUS1

The DAUS1 is an instrument for determining the level of SRL consisting of 40 items divided into four subscales: goal orientation (8 items), self-efficacy (16 items), metacognitive strategies (8 items), and study value (8 items); for the full version of the questionnaire, see Hrbáčková et al., 2011, pp. 40–41. Overall Cronbach’s alpha is only available for the original version of the questionnaire (comprising 103 items), with a value of 0.91. The instrument was created and validated for students in tertiary education. A 7-point scale ranging from ‘1’ as ‘least accurate’ to ‘7’ as ‘most accurate’ is used (Hrbáčková et al., 2011, p. 38).

The study was conducted as a part of a long-term research project of the Language Centre of the University of Defence, Brno, Czech Republic. The research population consisted of adult non-university students taking English language courses provided by the University of Defence Language Centre on behalf of the Ministry of Defence of the Czech Republic. The participants were military professionals.

A total of 403 participants took part in the research. A census was used: all students of military English courses organized between August 2022 and June 2023 were involved; 89% were male, 11% female. The average age was 38.2 years (range 23 to 58; SD=6.7).

Their educational background was fairly varied; see Table 1.

| ISCED level | Frequency | Percent | Valid Percent | Cumulative Percent |
|---|---|---|---|---|
| 353 | 26 | 6.45 | 6.63 | 6.63 |
| 354 | 216 | 53.60 | 55.10 | 61.74 |
| 344 | 10 | 2.48 | 2.55 | 64.29 |
| 650 | 3 | 0.74 | 0.77 | 65.05 |
| 640 | 39 | 9.68 | 9.95 | 75.00 |
| 740 | 91 | 22.58 | 23.21 | 98.21 |
| 840 | 7 | 1.74 | 1.79 | 100.00 |
| Missing | 11 | 2.73 | | |
| Total | 403 | 100.00 | | |

Note. Classification according to the International Standard Classification of Education (ISCED) 2011-A. The first digit of the ISCED code indicates the level of education: 3 = upper secondary education; 6 = post-secondary or short-cycle tertiary education (approximately 3–4 years of study); 7 = tertiary education (approximately 5–6 years of study); and 8 = doctoral degree programmes. The second digit represents the category of orientation (general = 4, vocational = 5), and the third digit reflects the extent to which the programme or attained education enables further progression in studies (Národní pedagogický institut České republiky, 2015).

Data analysis was carried out in several stages. Expert opinion was used to assess the suitability of the items for students in informal education. The scale was modified, and three cognitive interviews were subsequently performed to ascertain whether the items were sufficiently comprehensible to respondents. The modified scale was distributed to 45 military English course participants, 15 students from each level of English taught, i.e., levels 1, 2, and 3 in accordance with NATO STANAG 6001. The original Czech wording of the rest of the items, as well as the 7-point scale, was used. Follow-up interviews were conducted with nine of the participants.

After the first stage, the questionnaire required minor modifications. The second version was distributed to 403 participants. Exploratory factor analysis was used to verify the validity of the questionnaire.

In the first stage of the research, an expert panel consisting of three qualified EFL teachers discussed the suitability of individual DAUS1 items for the research population. Three items (Nos. 33, 34, and 36) were excluded as they addressed issues related to university study programmes.

Three other items (Nos. 5, 7, 22) were modified to better reflect the nature of language-learning environment in informal education. The wording of the items was also discussed in three cognitive interviews. Two more items (Nos. 30, 37) were then modified based on the participants’ comments. For all modified items, see Table 2.

| Item | Original wording | Modified wording |
|---|---|---|
| 5 | I study expert literature of my own volition, even though it is not required. | I actively search for new study materials to enhance my skills. |
| 7 | I buy or borrow recommended books related to my studies because I want to understand the field even more. | I buy or borrow recommended books because I want to understand what I learn in the course. |
| 22 | I think that in my studies I am better than most of my classmates. | I think that in my studies I am better than most of the students in my study group. |
| 30 | I often ask myself if I did everything I could to understand the subject. | I often ask myself if I did everything I could to understand what I am supposed to know. |
| 37 | It is essential for me to understand the matter studied. | It is essential for me to understand what I’m studying. |

Subsequently, we distributed the DAUS1 (without items 33, 34, and 36, and with modified items 5, 7, and 22) to 45 military English course participants, 15 students from each level of English taught, i.e., levels 1, 2, and 3 in accordance with NATO STANAG 6001. The original Czech wording of the rest of the items, as well as the 7-point scale, were used.

In the preliminary analysis, the data revealed an overwhelming tendency of the respondents to opt for the middle category. In the nine follow-up interviews conducted afterwards, the participants explained that they found the minute differences between the points of the scale confusing, and therefore frequently selected the option which required the least (cognitive) effort. Nemoto and Beglar (2014, p. 5) claim that neutral categories are not to be used as “Likert-scale categories should be conceptualized in the same way as physical measurement. If we look at a ruler, we find that there is no neutral category”. The authors also state (p. 5) that “[f]our points are desirable for young respondents and for respondents with low motivation to complete the questionnaire because 4-point scales are easy to understand and they require less effort to answer”. As the distribution of the data collected by means of the 7-point scale did not appear to reflect reality, we decided to condense the scale into a 4-point scale. Subsequently, we distributed the questionnaire and conducted statistical analysis to validate the new version.

The sample size of 403 meets the minimum requirement of 300 respondents specified by Field (2013) and Kahn (2006), which implies that the sample should suffice for a robust and reliable EFA. It also satisfies both Gorsuch’s (2014) suggested 5:1 case-to-variable ratio and Nunnally’s (1978) rule of thumb of at least ten times as many subjects as variables, which further supports the adequacy of the sample size. However, as Karami (2015) notes, the adequacy of the sample size is also contingent on the complexity of the model and the communalities of the items, rather than solely on the ratio of variables to subjects. According to Mundfrom et al. (2005), a minimum sample size of 350 is required to achieve the excellent level criterion, given a variables-to-factors ratio of five, broad levels of communality, and the extraction of up to six factors. Our data also meet these conditions, which supports the adequacy of our sample size.
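The ratio-based rules of thumb above can be checked with simple arithmetic. The following is a minimal sketch, assuming the 37 items of the modified questionnaire as the number of variables; the function name `meets_ratio` is hypothetical and serves illustration only.

```python
def meets_ratio(n_cases: int, n_variables: int, ratio: float) -> bool:
    """Return True when there are at least `ratio` cases per variable."""
    return n_cases >= ratio * n_variables


n_cases = 403      # respondents reported in the study
n_variables = 37   # items entering the first EFA (assumption: 40 minus 3 excluded)

cases_per_variable = n_cases / n_variables
print(round(cases_per_variable, 2))          # 10.89

print(meets_ratio(n_cases, n_variables, 5))   # 5:1 rule  -> True
print(meets_ratio(n_cases, n_variables, 10))  # 10:1 rule -> True
print(n_cases >= 300)                         # absolute minimum of 300 -> True
```

With roughly 10.9 cases per item, the sample clears the 10:1 rule by a narrow margin, which is why the additional criteria based on communalities and model complexity remain relevant.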

As the data were missing completely at random, missing values were handled through pairwise deletion in order to maintain the sample size. Descriptive statistics were used to screen the data set for outliers and impossible values.

The ordinal nature of the data, with a scale ranging from 1 to 4, suggests that the EFA be based on polychoric rather than Pearson correlations (Watkins, 2018). Skewness (-1.56 to 0.54) and kurtosis (-0.82 to 2.81) fell within the accepted ranges outlined by Hair et al. (2010) and Byrne (2010), which indicates that the data distribution should not adversely affect the factor analysis.

The reliability of the 37-item scale, as indicated by a Cronbach’s alpha of 0.78, denotes a sufficient level of internal consistency, which is crucial for the validity of the EFA results.
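For readers unfamiliar with the coefficient, the sketch below shows the textbook computation of Cronbach’s alpha from a complete respondents-by-items score matrix. It is an illustration only: the toy data are invented, and the function does not reproduce the pairwise deletion or any software-specific options used in the study.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for complete data.

    rows: list of respondents, each a list of item scores of equal length.
    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores))
    """
    k = len(rows[0])
    items = list(zip(*rows))  # transpose: one tuple of scores per item
    item_variance_sum = sum(variance(column) for column in items)
    total_variance = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - item_variance_sum / total_variance)


# Invented toy data: 4 respondents answering 3 items on a 1-4 scale.
toy_scores = [
    [1, 2, 2],
    [2, 2, 3],
    [3, 3, 3],
    [4, 4, 4],
]

alpha = cronbach_alpha(toy_scores)
print(round(alpha, 2))  # 0.95
```

Because the item variances are summed and compared with the variance of the total score, alpha rises when items covary strongly and, other things being equal, when more items are added.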

To confirm the factorability of the correlation matrix, Bartlett’s test of sphericity (Bartlett, 1954) was calculated. The statistically significant chi-square value (χ² = 8005.983; df = 666; p < 0.001) justifies the application of EFA to the data set.
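The degrees of freedom reported for Bartlett’s test follow directly from the number of items, df = p(p − 1)/2. The sketch below illustrates this together with the standard form of the test statistic, χ² = −(n − 1 − (2p + 5)/6) · ln|R|. The helper names and the 2 × 2 example matrix are hypothetical; the study’s own correlation matrix is not reproduced here.

```python
import math


def bartlett_df(p: int) -> int:
    """Degrees of freedom of Bartlett's test for p variables."""
    return p * (p - 1) // 2


def log_det(matrix):
    """Log-determinant of a positive-definite matrix via Gaussian elimination."""
    a = [row[:] for row in matrix]
    n = len(a)
    total = 0.0
    for i in range(n):
        pivot = a[i][i]
        if pivot <= 0:
            raise ValueError("matrix is not positive definite")
        total += math.log(pivot)
        for r in range(i + 1, n):
            factor = a[r][i] / pivot
            for c in range(i, n):
                a[r][c] -= factor * a[i][c]
    return total


def bartlett_chi2(corr, n_cases: int) -> float:
    """Bartlett's chi-square statistic for a correlation matrix."""
    p = len(corr)
    return -(n_cases - 1 - (2 * p + 5) / 6) * log_det(corr)


print(bartlett_df(37))  # 666, matching the df reported for the 37-item scale

# Hypothetical example: two variables correlated at 0.5, 100 cases.
example = [[1.0, 0.5], [0.5, 1.0]]
print(round(bartlett_chi2(example, 100), 2))  # 28.05
```

An identity matrix has a determinant of 1, so the statistic is zero; the further the correlations depart from zero, the larger the chi-square value becomes.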

Lastly, the Kaiser-Meyer-Olkin (KMO) overall measure of sampling adequacy (MSA) is 0.831, which falls in the ‘meritorious’ range on Kaiser’s (1974) scale and indicates that the data are well suited for EFA. Upon checking the MSA for each item, one item which did not comply with the required value was found: item 24, with an MSA of 0.550, performed as ‘miserable’ (Kaiser, 1974, p. 35). Nevertheless, at this stage, we decided not to exclude this item from the analysis.

As the first step to extract factors, we ran EFA by using parallel analysis, based on factor eigenvalues, and the Principal Axis Factoring (PAF) method, which focuses on the common variance among variables. The common factor model assumes that “the observed measures are interrelated because they share a common cause (i.e., they are influenced by the same underlying construct); if the latent construct was partitioned out, the intercorrelations among the observed measures will be zero” (Brown, 2015, p. 10). Since the data were not exact, we assumed that the underlying factors might be intercorrelated. Therefore, an oblique (oblimin) rotation was employed, as recommended by Izquierdo & Abad (2014). The analysis was based on a polychoric correlation matrix.

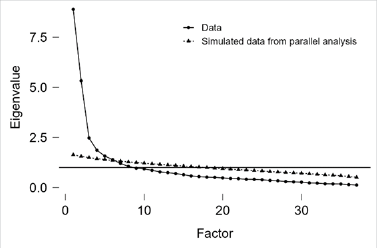

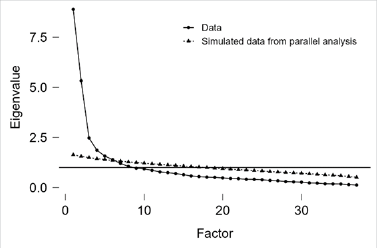

The analysis yielded a 7-factor solution in which the majority of items loaded on multiple factors; only 13 items loaded on a single factor. Upon examination of the structure of the seven factors, no common concepts appeared to explain any of them. We further inspected the scree plot (see Fig. 1), which suggested that three main factors ought to be considered in the following analyses.

To establish whether orthogonal rotation is a more suitable solution, we followed Tabachnick and Fidell (2007, p. 646) who state that:

Perhaps the best way to decide between orthogonal and oblique rotation is to request oblique rotation [e.g., direct oblimin or promax from SPSS] with the desired number of factors … and look at the correlations among factors … if correlations exceed .32, then there is 10% (or more) overlap in variance among factors, enough variance to warrant oblique rotation unless there are compelling reasons for orthogonal rotation.

For factor correlations, see Table 3.

Except for one correlation between F1 and F4, all correlations are below 0.32, thus providing a justification for orthogonal rotation.
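The .32 criterion corresponds to about 10% shared variance, since 0.32² ≈ 0.10. As a minimal sketch, the check can be run on the factor correlations reported in Table 3; the function name `pairs_above` is hypothetical.

```python
THRESHOLD = 0.32  # Tabachnick and Fidell's cut-off for factor correlations

# Factor correlation matrix as reported in Table 3 (7-factor oblimin solution).
factor_corr = [
    [1.000, -0.027, 0.113, 0.511, 0.229, 0.217, 0.234],
    [-0.027, 1.000, 0.298, 0.040, 0.254, 0.148, -0.076],
    [0.113, 0.298, 1.000, 0.090, 0.281, 0.275, 0.114],
    [0.511, 0.040, 0.090, 1.000, 0.266, 0.175, 0.166],
    [0.229, 0.254, 0.281, 0.266, 1.000, 0.217, 0.008],
    [0.217, 0.148, 0.275, 0.175, 0.217, 1.000, 0.078],
    [0.234, -0.076, 0.114, 0.166, 0.008, 0.078, 1.000],
]


def pairs_above(corr, threshold):
    """List (factor_i, factor_j, r) for off-diagonal |r| above the threshold."""
    found = []
    for i in range(len(corr)):
        for j in range(i + 1, len(corr)):
            if abs(corr[i][j]) > threshold:
                found.append((i + 1, j + 1, corr[i][j]))
    return found


print(round(THRESHOLD ** 2, 4))             # 0.1024, i.e. about 10% overlap
print(pairs_above(factor_corr, THRESHOLD))  # [(1, 4, 0.511)]
```

Only the F1 and F4 pair exceeds the cut-off; its squared correlation (0.511² ≈ 0.26) corresponds to roughly a quarter of shared variance between those two factors.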

In the subsequent phase, an orthogonal rotation varimax, which assumes uncorrelated factors, was employed to achieve a simpler and more interpretable factor structure. Considering the results of the scree plot inspection, the number of factors to be retained was manually set to three. Although the analysis yielded three factors explaining 41% of variance, item 34 (“It is essential for me to understand what I study”) loaded on all three factors, and items 22 (“I think that in my studies I am better than most of the students in my study group”), 24 (“When I finish writing my test I know how successful I was”), and 37 (“I believe that what I learn here will be useful in my work later”) did not saturate any factor. In addition, an in-depth examination of item content relatedness to the factors revealed items 8 (“I keep rereading my study materials (lecture notes, textbooks) at home”) and 35 (“I learn by finding links from various sources (lecture notes, textbooks, recommended reading)”) to be irrelevant to the factor content. Thus, these six items were excluded, and the number of items was reduced from 37 to 31.

| | Factor 1 | Factor 2 | Factor 3 | Factor 4 | Factor 5 | Factor 6 | Factor 7 |
|---|---|---|---|---|---|---|---|
| Factor 1 | 1.000 | -0.027 | 0.113 | 0.511 | 0.229 | 0.217 | 0.234 |
| Factor 2 | -0.027 | 1.000 | 0.298 | 0.040 | 0.254 | 0.148 | -0.076 |
| Factor 3 | 0.113 | 0.298 | 1.000 | 0.090 | 0.281 | 0.275 | 0.114 |
| Factor 4 | 0.511 | 0.040 | 0.090 | 1.000 | 0.266 | 0.175 | 0.166 |
| Factor 5 | 0.229 | 0.254 | 0.281 | 0.266 | 1.000 | 0.217 | 0.008 |
| Factor 6 | 0.217 | 0.148 | 0.275 | 0.175 | 0.217 | 1.000 | 0.078 |
| Factor 7 | 0.234 | -0.076 | 0.114 | 0.166 | 0.008 | 0.078 | 1.000 |

Cronbach’s alpha for 31 items reached 0.73, which indicates sufficient internal consistency of the scale. The overall MSA of 0.847 suggests high suitability of the data for EFA. Unlike in the previous analysis, all items exhibited MSA values above 0.73. Bartlett’s test of sphericity was statistically significant (χ² = 6285.502; df = 465; p < 0.001).

EFA conducted with 31 items resulted in an interpretable simple structure solution where “each factor is loaded by salient variables and each variable loads saliently on only one factor” (Brown, 2015; Gorsuch, 2014). A loading is considered substantial if it meets or exceeds a value of 0.4. Three factors explained 43% of variance. Factor 1 – self-efficacy (14 items) accounted for 19% of variance, Factor 2 – metacognitive learning strategies (8 items) accounted for 12% of variance, and Factor 3 – motivation (9 items) accounted for 12% of variance.

We examined the internal consistency of the individual subscales. Cronbach’s alpha values were as follows: the self-efficacy subscale scored 0.87, the metacognition subscale scored 0.83, and the motivation subscale scored 0.44. The subsequent examination of individual item reliability in the motivation subscale revealed that dropping item 7 (“I buy or borrow recommended books because I want to understand what I learn in the course”) would lead to increased reliability. Cronbach’s alpha for the motivation subscale reached 0.61 after item 7 was excluded. As Cronbach’s alpha is sensitive to the number of items (Briggs & Cheek, 1986), the mean inter-item correlation (MIIC) was calculated for the motivation subscale, reaching 0.16, which indicated that the internal consistency is acceptable (cf. Clark & Watson, 1995). Cronbach’s alpha for the 30-item scale increased to 0.83. EFA was then conducted for the 30-item scale to confirm the structure. The overall MSA of 0.849 showed high suitability of the data for EFA. All items exhibit MSA values above 0.73. Bartlett’s test of sphericity was significant (χ² = 6023.360; df = 435; p < 0.001).
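The reliability statistics used in this step can be sketched in a few lines. The code below is a minimal illustration, not the software used in the study: it computes Cronbach’s alpha, alpha after dropping each item in turn, the mean inter-item correlation, and the standardized alpha implied by a given MIIC (the Spearman-Brown form). All function names and the toy scores are hypothetical.

```python
from itertools import combinations
from statistics import mean, variance


def cronbach_alpha(items):
    """items: list of per-item score lists, one score per respondent."""
    k = len(items)
    totals = [sum(scores) for scores in zip(*items)]
    item_variance_sum = sum(variance(scores) for scores in items)
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))


def alpha_if_item_dropped(items):
    """Alpha recomputed with each item removed in turn."""
    return [cronbach_alpha(items[:i] + items[i + 1:]) for i in range(len(items))]


def pearson(x, y):
    """Pearson correlation between two equally long score lists."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


def mean_inter_item_correlation(items):
    """Average of the correlations over all item pairs."""
    return mean(pearson(a, b) for a, b in combinations(items, 2))


def standardized_alpha(k, r_bar):
    """Alpha implied by k items with mean inter-item correlation r_bar."""
    return k * r_bar / (1 + (k - 1) * r_bar)


# Toy scores for three items and four respondents (hypothetical data)
toy_items = [[1, 2, 3, 4], [1, 2, 3, 4], [2, 1, 4, 3]]
toy_alpha = cronbach_alpha(toy_items)
toy_dropped = alpha_if_item_dropped(toy_items)
toy_miic = mean_inter_item_correlation(toy_items)
```

As a rough plausibility check, `standardized_alpha(8, 0.16)` is about 0.60 for the eight remaining motivation items, which is close to the reported alpha of 0.61. Standardized and raw alpha are not identical, so exact agreement should not be expected.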

Table 4 shows the factor characteristics. The loadings of the factors of self-efficacy (F1) and metacognitive strategies (F2) correspond with the original scale. The new factor of motivation (F3) comprises the original subscales of study value and goal orientation. For the factor loading matrix, see Table 5.

| | Eigenvalues | Unrotated solution: SumSq. Loadings | Unrotated solution: Proportion var. | Unrotated solution: Cumulative | Rotated solution: SumSq. Loadings | Rotated solution: Proportion var. | Rotated solution: Cumulative |
|---|---|---|---|---|---|---|---|
| Factor 1 | 7.578 | 7.018 | 0.234 | 0.234 | 5.794 | 0.193 | 0.193 |
| Factor 2 | 4.854 | 4.326 | 0.144 | 0.378 | 3.868 | 0.129 | 0.322 |
| Factor 3 | 2.236 | 1.694 | 0.056 | 0.435 | 3.375 | 0.113 | 0.435 |
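The proportions in the factor characteristics appear to follow from dividing each sum of squared loadings by the number of items in the final scale (30), with the cumulative column as a running total. The sketch below reproduces that arithmetic from the reported values. The function name is hypothetical, and the division by 30 is an inference from the reported figures rather than a procedure stated in the text.

```python
N_ITEMS = 30  # number of items in the final scale

# Sums of squared loadings as reported for the three factors
UNROTATED = [7.018, 4.326, 1.694]
ROTATED = [5.794, 3.868, 3.375]


def variance_shares(sum_sq_loadings, n_items=N_ITEMS):
    """Return (proportions, cumulative proportions) of variance explained."""
    proportions = [value / n_items for value in sum_sq_loadings]
    cumulative = []
    running = 0.0
    for share in proportions:
        running += share
        cumulative.append(running)
    return proportions, cumulative


unrotated_props, unrotated_cum = variance_shares(UNROTATED)
rotated_props, rotated_cum = variance_shares(ROTATED)
```

Both solutions end at a cumulative share of roughly 0.435: rotation redistributes the explained variance among the factors without changing the total.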

| Item | Factor 1 | Factor 2 | Factor 3 | Uniqueness | Item wording |
|---|---|---|---|---|---|
| 14 | 0.715 | | | 0.430 | I control my learning. |
| 16 | 0.709 | | | 0.496 | I can tell which piece of information is the most important and which is less important. |
| 20 | 0.681 | | | 0.522 | When I know what is challenging for me when learning, I can deal with it. |
| 13 | 0.679 | | | 0.449 | I expect to succeed in my studies. |
| 19 | 0.645 | | | 0.518 | I can manage my study time so that I succeed at the exams later. |
| 09 | 0.638 | | | 0.588 | I can assess the requirements I am to fulfil in my studies. |
| 12 | 0.605 | | | 0.585 | I can organize my study materials so that they are convenient when studying. |
| 10 | 0.604 | | | 0.623 | I can tell which learning method is appropriate in a given situation. |
| 18 | 0.598 | | | 0.615 | I believe that if I decide to succeed, I will. |
| 23 | 0.576 | | | 0.617 | I often feel I do not understand anything and will not master anything. |
| 15 | 0.568 | | | 0.598 | I do not give up easily, even when I do not understand something. |
| 21 | 0.557 | | | 0.679 | I am not afraid to perform advanced tasks required for passing the exam. |
| 11 | 0.555 | | | 0.636 | I know my strengths and weaknesses when studying. |
| 17 | 0.541 | | | 0.648 | I have got a good memory. |
| 31 | | 0.781 | | 0.383 | I often analyze or assess myself when studying. |
| 29 | | 0.713 | | 0.462 | When learning, I test myself to find out how well I understand the material. |
| 28 | | 0.700 | | 0.483 | Before I start learning, I say to myself what I am about to do (what to do first, what to do next). |
| 30 | | 0.677 | | 0.478 | I often ask myself if I have done everything I could to understand what I am supposed to know. |
| 27 | | 0.674 | | 0.526 | When learning new things, I often ask myself questions to find out how well I am doing. |
| 26 | | 0.673 | | 0.512 | I often find myself stopping and checking that I understand everything. |
| 32 | | 0.494 | | 0.654 | I usually divide what I have to learn into parts which I learn one by one. |
| 25 | | 0.462 | | 0.770 | When learning I need to make sure that I am going in the right direction. |
| 06 | | | 0.715 | 0.417 | I like to study. |
| 03 | | | 0.682 | 0.477 | I study even if I do not have to. |
| 05 | | | 0.679 | 0.524 | I actively search for new study materials to enhance my skills. |
| 01 | | | 0.581 | 0.624 | I have to force myself to study. |
| 04 | | | -0.539 | 0.617 | Within my studies, I do more than I am asked to by the teacher. |
| 36 | | | 0.515 | 0.644 | I see studying as my hobby. |
| 02 | | | 0.503 | 0.710 | When learning, I often think about something else rather than what I am learning about. |
| 33 | | | 0.432 | 0.677 | I find it useful to make an effort and study hard. |

Note. The applied rotation method is varimax. Only salient loadings (≥0.4) are displayed. Items 01, 02, and 23 were reversed.
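Reverse-scored items need to be recoded before any reliability or factor analysis. The sketch below shows one common way to do this for a four-category response scale. It assumes the categories are coded 1 to 4, which is not stated in the text; the function names and the example response are hypothetical.

```python
SCALE_MIN, SCALE_MAX = 1, 4  # assumed coding of the four response categories
REVERSED_ITEMS = {"01", "02", "23"}  # items reported as reversed in the note


def reverse_score(value, low=SCALE_MIN, high=SCALE_MAX):
    """Mirror a score around the midpoint of the scale (1 <-> 4, 2 <-> 3)."""
    return low + high - value


def recode_response(response):
    """response: dict mapping item id to raw score; reversed items are flipped."""
    return {
        item: reverse_score(score) if item in REVERSED_ITEMS else score
        for item, score in response.items()
    }


# Hypothetical raw answers from one respondent
raw = {"01": 1, "02": 4, "06": 3, "23": 2}
recoded = recode_response(raw)
```

After recoding, a high score on every item points in the same direction, so a respondent who strongly disagrees with “I have to force myself to study” receives the highest score on that item.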

In this study, we endeavoured to validate an adapted self-regulated learning questionnaire using appropriate statistical methods. Following Watkins’s (2018) recommendations on how to optimize the statistical analysis of ordinal data, it was crucial to perform EFA on the polychoric correlation matrix. The widely used Pearson correlation matrix is often misapplied to variables that are not strictly numeric. Instead of the routinely applied PCA, common factor analysis was selected to identify a latent factor structure. PCA is only a data reduction tool, and its components do not provide any information about the underlying dimensions in a measure (Karami, 2015). Additionally, non-exact data require that an oblique rotation be considered, as it is particularly well suited to constructs whose factors may be correlated. We adhered to the method best suited for our data.

The final exploratory factor analysis of the adapted instrument yielded three factors: motivation, self-efficacy, and metacognitive learning strategies. The factors broadly correspond with Zimmerman’s (2000) model of SRL. The final factor structure does not fully align with the structure of the DAUS1, which comprised four factors (goal orientation, self-efficacy, metacognitive learning strategies, and study value). In our EFA, ‘study value’ and ‘goal orientation’ formed a single factor (motivation), which represents Zimmerman’s concept more accurately. Interestingly, these items also formed a single factor in the DAUS questionnaire (cf. Hrbáčková et al., 2011, p. 38), before it was validated and modified by Hrbáčková et al. into the DAUS1 version. Also, in the DAUS, self-efficacy formed part of the section dealing with motivation; in contrast, in our study, self-efficacy stands alone as a factor (complying with the DAUS1 in this respect). This demonstrates that motivation, as a multi-layered construct, is not easy to capture. It perhaps also suggests that melding various instruments, or sections of various instruments measuring such a construct, into a single one ought to be done with caution, as it may pose an extra challenge for validation.

In our study, the original ‘study value’ factor presented the greatest problem – even prior to conducting EFA, upon closer qualitative examination, several items proved to be incongruous with the construct. Item 8 (I keep re-reading my materials (lecture notes, textbooks) at home) as well as item 35 (I learn by finding links from various sources) did not align in content with the remaining items loading on the study value factor and appeared instead to be learning strategies, despite not loading on the learning strategies factor. Our study demonstrates that it is highly advisable not to rely solely on the factor model that the statistical analysis has provided, and to examine the items loading on a factor thoroughly to verify that they do not deviate from the remaining items in terms of content.

To ensure the acquisition of valid data from our respondents, we reduced the number of response categories to four. Because this is fewer categories than in the original version of the DAUS, we expected the reliability to be slightly lower, in accordance with the findings of Preston and Colman (2000, pp. 5–6). The overall Cronbach’s alpha value of the scale validated in our study is 0.83, indicating good reliability. Preston and Colman (2000, p. 13) also acknowledge the need to adjust the scales depending on the context of the research, as well as the respondents’ preferences (p. 4). Our respondents were primarily male, and the gender effect, as observed in survey participation and nonresponse across a number of studies (Becker, 2022, p. 4; Green, 1996, p. 176), might have manifested in the respondents’ unwillingness to answer the questions using a 7-point scale, leading to a condensed 4-point scale. The reduced number of response categories in our questionnaire might be limiting for research that concentrates on the finer points of SRL.

We adapted the questionnaire for a specific population, namely, Czech adult learners in informal education. Our respondents were adults with varying formal educational backgrounds; all of them work for the military, although their specific professions differ. Having a validated instrument for measuring SRL in adult learners in informal education is an asset for future research.

The aim of our study was to validate an adapted version of the DAUS1 questionnaire for SRL for a specific group of students – Czech adult learners in informal education, more specifically, in foreign language courses.

After minor qualitative adaptations, we conducted EFA and arrived at a three-factor model, with the factors being motivation, self-efficacy, and metacognitive learning strategies. Cronbach’s alpha indicates acceptable reliability. The adapted questionnaire was validated successfully and is suitable for investigating SRL – specifically, self-efficacy, metacognitive learning strategies, and motivation – in adult learners.

The population for which the questionnaire was adapted, i.e., adult learners in informal education, appears to be underrepresented in SRL studies. When investigating SRL in adult learners, most studies focus on university students. This new valid instrument should facilitate further research in SRL in the context of lifelong education.

Jana Rozsypálková: conceptualization, methodology, formal analysis, investigation, writing – original draft, writing – review and editing.

Eva Bumbálková: conceptualization, methodology, writing – original draft, writing – review and editing.

References

Akin, A., Abaci, R., & Çetin, B. (2007). The validity and reliability of the Turkish version of the metacognitive awareness inventory. Kuram ve Uygulamada Eğitim Bilimleri, 7(2), 671–678.

Alotaibi, A. S. (2024). The factor structure of the Arabic version of the metacognitive awareness inventory short version: Insights from network analysis. Metacognition and Learning, 19, 661–679. https://doi.org/10.1007/s11409-024-09384-z

Balaban, V., Koribská, I., & Chudý, Š. (2019). The Associations Among the Academic Self-Concept Elements of Entry Level Academic. The European Journal of Social & Behavioural Sciences, 25(2), 2927–2932. https://doi.org/10.15405/ejsbs.255

Balçıkanlı, C. (2011). Metacognitive Awareness Inventory for Teachers (MAIT). Electronic Journal of Research in Educational Psychology, 9(25), 1309–1332. https://doi.org/10.25115/ejrep.v9i25.1620

Bartlett, M. S. (1954). A further note on the multiplying factors for various chi-square approximations in factor analysis. Journal of the Royal Statistical Society, Series B (Methodological), 16(2), 296–298. https://doi.org/10.1111/j.2517-6161.1954.tb00174.x

Becker, R. (2022). Gender and survey participation: An event-history analysis of the gender effects of survey participation in a probability-based multi-wave panel study with a sequential mixed-mode design. Methods, Data, Analyses, 16(1), 3–32. https://doi.org/10.12758/mda.2021.08

Boekaerts, M., & Corno, L. (2005). Self-regulation in the classroom: A perspective on assessment and intervention. Applied Psychology: An International Review, 54(2), 199–231. https://doi.org/10.1111/j.1464-0597.2005.00205.x

Briggs, S. R., & Cheek, J. M. (1986). The role of factor analysis in the development and evaluation of personality scales. Journal of Personality, 54(1), 106–148. https://doi.org/10.1111/j.1467-6494.1986.tb00391.x

Brown, T. A. (2015). Confirmatory factor analysis for applied research (2nd ed.). New York, NY: Guilford Press.

Byrne, B. M. (2010). Structural equation modeling with AMOS: Basic concepts, applications, and programming. New York, NY: Routledge.

Cano, F. (2006). An in-depth analysis of the Learning and Study Strategies Inventory (LASSI). Educational and Psychological Measurement, 66(6), 1023–1038. https://doi.org/10.1177/0013164406288167

Chang, M.-M. (2010). Effects of self-monitoring on web-based language learner’s performance and motivation. CALICO Journal, 27(2), 298–310. http://www.jstor.org/stable/calicojournal.27.2.298

Clark, L. A., & Watson, D. (1995). Constructing validity: Basic issues in objective scale development. Psychological Assessment, 7(3), 309–319. https://doi.org/10.1037/1040-3590.7.3.309

Credé, M., & Phillips, L. A. (2011). A meta-analytic review of the Motivated Strategies for Learning Questionnaire. Learning and Individual Differences, 21(3), 337–346. https://doi.org/10.1016/j.lindif.2011.03.002

de Araujo, J., Gomes, C., & Jelihovschi, E. (2023). The factor structure of the Motivated Strategies for Learning Questionnaire (MSLQ): New methodological approaches and evidence. Psicologia: Reflexão e Crítica, 36, Article 38. https://doi.org/10.1186/s41155-023-00280-0

Dunn, K. E., Lo, W.-J., Mulvenon, S. W., & Sutcliffe, R. (2012). Revisiting the Motivated Strategies for Learning Questionnaire: A theoretical and statistical reevaluation of the metacognitive self-regulation and effort regulation subscales. Educational and Psychological Measurement, 72(2), 312–331. https://doi.org/10.1177/0013164411413461

Field, A. (2013). Discovering statistics using IBM SPSS statistics. SAGE Publications Ltd.

Fong, C. J., Krou, M. R., Johnston-Ashton, K., Hoff, M. A., Lin, S., & Gonzales, C. (2021). LASSI’s great adventure: A meta-analysis of the Learning and Study Strategies Inventory and academic outcomes. Educational Research Review, 34, Article 100407. https://doi.org/10.1016/j.edurev.2021.100407

Gorsuch, R. L. (2014). Factor analysis: Classic edition. New York: Routledge.

Green, K. E. (1996). Sociodemographic factors and mail survey response. Psychology & Marketing, 13(2), 171–184. https://doi.org/10.1002/(SICI)1520-6793(199602)13:2%3C171::AID-MAR4%3E3.0.CO;2-C

Hair, J., Black, W. C., Babin, B. J., & Anderson, R. E. (2010). Multivariate data analysis (7th ed.). Upper Saddle River, NJ: Pearson Educational International.

Harrison, G., & Vallin, L. (2018). Evaluating the Metacognitive Awareness Inventory using empirical factor-structure evidence. Metacognition and Learning, 13, 15–38. https://doi.org/10.1007/s11409-017-9176-z

Holec, H. (1981). Autonomy in foreign language learning. Oxford, UK: Pergamon Press.

Hrbáčková, K., Hladík, J., Vávrová, S., & Švec, V. (2011). Development of the Czech version of the questionnaire of self-regulated learning of students. The New Educational Review, 26(4), 33–44.

UNESCO Institute for Statistics. (2012). International Standard Classification of Education (ISCED) 2011. https://unesdoc.unesco.org/ark:/48223/pf0000219109

Izquierdo, I., Olea, J., & Abad, F. J. (2014). Exploratory factor analysis in validation studies: Uses and recommendations. Psicothema, 26(3), 395–400. https://doi.org/10.7334/psicothema2013.349

Kahn, J. H. (2006). Factor analysis in counseling psychology research, training, and practice: Principles, advances, and applications. The Counseling Psychologist, 34(5), 684–718. https://doi.org/10.1177/0011000006286347

Kaiser, H. F. (1974). An index of factorial simplicity. Psychometrika, 39(1), 31–36. https://doi.org/10.1007/BF02291575

Kallio, H., Virta, K., & Kallio, M. (2018). Modelling the components of metacognitive awareness. International Journal of Educational Psychology, 7(2), 94–122. https://doi.org/10.17583/ijep.2018.2789

Karami, H. (2015). Exploratory factor analysis as a construct validation tool: (Mis)applications in applied linguistics research. TESOL Journal, 6(3), 476–498. https://doi.org/10.1002/tesj.176

Knowles, M. S., Holton, E. F., & Swanson, R. A. (2011). The adult learner. UK: Elsevier, Butterworth-Heinemann.

Liu, J., Xiang, P., McBride, R., & Chen, H. (2019). Psychometric properties of the cognitive and metacognitive learning strategies scales among preservice physical education teachers: A bifactor analysis. European Physical Education Review, 25(3), 616–639. https://doi.org/10.1177/1356336X18755087

Magno, C. (2011). Validating the academic self-regulated learning scale with the motivated strategies for learning questionnaire (MSLQ) and learning and study strategies inventory (LASSI). The International Journal of Educational and Psychological Assessment, 7(2), 56–73.

Marland, J., Dearlove, J., & Carpenter, J. (2015). LASSI: An Australian evaluation of an enduring study skills assessment tool. Journal of Academic Language and Learning, 9(2), A32–A45. https://journal.aall.org.au/index.php/jall/article/view/327

Masoodi, M. (2019). An investigation into metacognitive awareness level: A comparative study of Iranian and Lithuanian university students. New Educational Review, 56(2), 148–160.

Meijs, C., Neroni, J., Gijselaers, H. J. M., Leontjevas, R., Kirschner, P. A., & De Groot, R. H. M. (2019). Motivated Strategies for Learning Questionnaire part B revisited: New subscales for an adult distance education setting. The Internet and Higher Education, 40, 1–11. https://doi.org/10.1016/j.iheduc.2018.09.003

Mundfrom, D. J., Shaw, D. G., & Ke, T. L. (2005). Minimum sample size recommendations for conducting factor analyses. International Journal of Testing, 5(2), 159–168. https://doi.org/10.1207/s15327574ijt0502_4

Národní pedagogický institut České republiky. (2015). Nová klasifikace ISCED 2011. Retrieved from https://www.npi.cz

NATO Standardization Office. (2016). NATO STANAG 6001 Ed. 5. https://www.natobilc.org/files/ATrainP-5%20EDA%20V2%20E.pdf

Nemoto, T., & Beglar, D. (2014). Developing Likert-scale questionnaires. In N. Sonda & A. Krause (Eds.), JALT2013 Conference Proceedings (pp. 1–8). Tokyo: JALT.

Nosratinia, M., Saveiy, M., & Zaker, A. (2014). EFL learners’ self-efficacy, metacognitive awareness, and use of language learning strategies: How are they associated? Theory and Practice in Language Studies, 4(5), 1080–1092. https://doi.org/10.4304/tpls.4.5.1080-1092

Nunnally, J. (1978). Psychometric theory (2nd ed.). New York, NY: McGraw-Hill.

Panadero, E. (2017). A review of self-regulated learning: Six models and four directions for research. Frontiers in Psychology, 8, Article 422. https://doi.org/10.3389/fpsyg.2017.00422

Pawlak, M. (2016). Teaching foreign languages to adult learners: Issues, options, and opportunities. Theoria Et Historia Scientiarum, 12, 45–65. https://doi.org/10.12775/ths.2015.004

Pintrich, P. R. (2000). The role of goal orientation in self-regulated learning. In M. Boekaerts, P. R. Pintrich, & M. Zeidner (Eds.), Handbook of self-regulation (pp. 451–502). San Diego, CA: Academic Press.

Pintrich, P. R., & de Groot, E. V. (1990). Motivated Strategies for Learning Questionnaire (MSLQ) [Database record]. APA PsycTests. https://doi.org/10.1037/t09161-000

Pintrich, P. R., Smith, D. A., Garcia, T., & McKeachie, W. J. (1991). A manual for the use of the motivated strategies for learning. Michigan: School of Education Building, The University of Michigan. ERIC database number: ED338122.

Preston, C. C., & Colman, A. M. (2000). Optimal number of response categories in rating scales: Reliability, validity, discriminating power, and respondent preferences. Acta Psychologica, 104(1), 1–15. https://doi.org/10.1016/S0001-6918(99)00050-5

Ramírez Echeverry, J. J., García Carrillo, À., & Olarte Dussan, F. A. (2016). Adaptation and validation of the motivated strategies for learning questionnaire-MSLQ-in engineering students in Colombia. International Journal of Engineering Education, 32(4), 1774–1787.

Rock, M. L. (2005). The use of strategic self-monitoring to enhance academic engagement, productivity, and accuracy in students with and without exceptionalities. Journal of Positive Behavior Interventions, 7(1), 3–17. https://doi.org/10.1177/10983007050070010201

Roth, A., Ogrin, S., & Schmitz, B. (2016). Assessing self-regulated learning in higher education: A systematic literature review of self-report instruments. Educational Assessment, Evaluation and Accountability, 28(1), 1–29. https://doi.org/10.1007/s11092-015-9229-2

Schraw, G., & Dennison, R. S. (1994). Assessing metacognitive awareness. Contemporary Educational Psychology, 19(4), 460–475. https://doi.org/10.1006/ceps.1994.1033

Siqueira, M., Gonçalves, J., Mendonça, V., Kobayasi, R., Arantes-Costa, F., Tempski, P., & Martins, M. (2020). Relationship between metacognitive awareness and motivation to learn in medical students. BMC Medical Education, 20, Article 318. https://doi.org/10.1186/s12909-020-02318-8

Sperling, R. A., Howard, B. C., Miller, L. A., & Murphy, C. (2002). Measures of children’s knowledge and regulation of cognition. Contemporary Educational Psychology, 27(1), 51–79. https://doi.org/10.1006/ceps.2001.1091

Soemantri, D., McColl, G., & Dodds, A. (2018). Measuring medical students’ reflection on their learning: Modification and validation of the motivated strategies for learning questionnaire (MSLQ). BMC Medical Education, 18, Article 274. https://doi.org/10.1186/s12909-018-1384-y

Susnea, S., Cretu, C. M., Lomos, C., & Susnea, I. (2025). Adaptation and psychometric properties of a Romanian version of Metacognitive Awareness Inventory for Teachers (RoMAIT). Education Sciences, 15(5), Article 583. https://doi.org/10.3390/educsci15050583

Tabachnick, B. G., & Fidell, L. S. (2007). Using multivariate statistics (5th ed.). Boston, MA: Pearson.

Watkins, M. W. (2018). Exploratory factor analysis: A guide to best practice. Journal of Black Psychology, 44(3), 219–246. https://doi.org/10.1177/0095798418771807

Weinstein, C. E., & Palmer, D. R. (1990). LASSI-HS Learning and Study Strategies Inventory High School. Clearwater, FL: H&H Publishing.

Weinstein, C. E., Palmer, D. R., & Schulte, A. C. (2006). LASSI Online Learning and Study Strategies Inventory. Clearwater, FL: H&H Publishing.

Weinstein, C. E., Schulte, A., & Palmer, D. (1987). LASSI: Learning and Study Strategies Inventory. Clearwater, FL: H&H Publishing.

Weinstein, C. E., Palmer, D. R., & Acee, T. W. (2016). Learning and Study Strategies Inventory (LASSI): User’s Manual (3rd ed.). Clearwater, FL: H&H Publishing. https://www.hhpublishing.com/LASSImanual.pdf

Winne, P. H. (2001). Self-regulated learning viewed from models of information processing. In B. J. Zimmerman & D. H. Schunk (Eds.), Self-regulated learning and academic achievement: Theoretical perspectives (2nd ed., pp. 153–189). Mahwah, NJ: Lawrence Erlbaum.

Winne, P. H. (2011). A cognitive and metacognitive analysis of self-regulated learning. In B. J. Zimmerman & D. H. Schunk (Eds.), Handbook of Self-Regulation of Learning and Performance (pp. 15–32). New York, NY: Routledge.

Winne, P. H., & Perry, N. E. (2000). Measuring self-regulated learning. In M. Boekaerts, P. R. Pintrich, & M. Zeidner (Eds.), Handbook of Self-Regulation (pp. 531–566). Orlando, FL: Academic Press.

Young, A., & Fry, J. D. (2008). Metacognitive awareness and academic achievement in college students. Journal of the Scholarship of Teaching and Learning, 8(2), 1–10.

Zhang, L. (2010). Do thinking styles contribute to metacognition beyond self-rated abilities? Educational Psychology, 30(4), 481–494. https://doi.org/10.1080/01443411003659986

Zhou, Y., & Wang, J. (2021). Psychometric properties of the MSLQ-B for adult distance education in China. Frontiers in Psychology, 12, Article 620564. https://doi.org/10.3389/fpsyg.2021.620564

Zimmerman, B. J. (2000). Attaining self-regulation: A social cognitive perspective. In M. Boekaerts, P. R. Pintrich, & M. Zeidner (Eds.), Handbook of Self-Regulation (pp. 13–39). San Diego, CA: Academic Press. https://doi.org/10.1016/B978-012109890-2/50031-7

Zimmerman, B. J., & Martínez Pons, M. (1986). Development of a structured interview for assessing student use of self-regulated learning strategies. American Educational Research Journal, 23(4), 614–628. https://doi.org/10.2307/1163093